Let's Start a Chat About ChatGPT, AI in Government

It's here, it's here to stay, and we need to work out best practices. Let the GGF conversation begin.

Where to start with the use of Artificial Intelligence in government work? Well, for one, it’s already here. Do your finance folks use Excel to crunch numbers? Of course they do. So your organization is using AI. Just not the scary or controversial AI that can occasionally make mistakes (see “Hallucinations” below).

We’re seeing all sorts of exciting (and not scary) uses of AI in government. We’ve got robots mowing grass and striping athletic fields; your city or county has likely been using computer-aided dispatch (CAD) for years to assist call-takers in conveying vital information to field personnel, ensuring quicker response and increasing situational awareness; the federal government even has a website, ai.gov, with a section dedicated to showing how it is using AI to better serve the public.

It’s exciting to see the rapid adoption of AI tools in the public sector to innovate, increase efficiency and enhance services. These tools will undoubtedly become more prevalent, much in the same way the internet and social media went from nerdy curiosities to mainstays. (Younger readers may not believe there was a time before social media; trust me, I was there.)

The difference between AI and social media is that the former is being rapidly adopted, while the latter took some time. I can assure you that back in the day we didn’t see all the risks that social media would present. Frankly, I think social media has been a net negative for governing. There’s been lots of good, to be sure; but I don’t really recall anyone foreseeing the way platform algorithms would create information bubbles that kept users from being exposed to different viewpoints, to say nothing of the widespread dissemination of “alternative facts.”

So, we should wade into AI with our eyes wide open. We’ll start with some thoughts from Dr. Jacque Lambiase, friend of GGF and director of TCU’s Certified Public Communicator program. I’ve heard Jacque speak a couple of times on AI, most recently at SGR’s HR Collaborative networking webinar on Jan. 18. (Full disclosure: I do some consulting for SGR.) She also co-presented on “Concerns and Capacity for AI” with Julie Goodgame of the City of Tyler, Texas, at the 3CMA conference last September.

First thoughts: Do your due diligence

Jacque quoted Buckminster Fuller, the American futurist, who said, “We are called to be the architects of the future, not its victims.”

With that thought, some due diligence is required before we use AI in responsible and ethical ways, she says. What kind of preparation and discussion is needed before we put into practice the use of tools like ChatGPT and Bard, which can write custom essays and reports, and create images based on your descriptions and prompts?

“There are two (things) that I would say we would want to focus on. One is disclosure and the other is decision making,” Jacque says. “I think if we are open to being a disclosing-type of organization with transparency about the ways that we’re using artificial intelligence, and if we can promise the communities that we serve, that we are the decision makers, that nothing else or no one else is making decisions except for humans inside our own systems, then I think we started off on the right foot.”

The disclosure piece should be really easy, IMO. For the love of god, if the feds can do it (here’s the link again), anyone can do it. And I would maybe add a kind of disclaimer on the disclosure page, something we did in Round Rock back in the early days of social media. We said we were going to try these new tools for six months. We were hoping for a healthy dialogue but if the trolls took over then all bets were off, and we’d pull the plug. Six months came and went. We frankly kinda forgot about it because the channels were working the way we hoped. I strongly suspect it will be the same with AI. That said, I still think it’s important to let the public know why you are using these new tools and that if things don’t work out as planned, you’ll drop them or change how you’re using them.

The decision-making piece also needs to be stated out loud, early, and often. I think folks get nervous when they hear the government is using AI. (Skynet, anyone?) I also think they’ll calm down when they learn about the ways AI is delivering cost savings and efficiency, like with robot lawn mowers. That said, Julie noted some folks in Tyler were upset that a machine was doing the job a human could do, i.e., mow the grass at a park. In many cities, governments are among the community’s largest employers so folks may get understandably nervous about decreasing employment opportunities.

“People are certainly concerned about job displacement,” Jacque says, “and I think as a leader in your organization, you’ll have to speak to that, (that) it isn’t going to replace people in your organization, if you can honestly say that.”

Posting those kinds of statements on your website is important to maintaining trust and credibility. If you’re using these tools and haven’t disclosed it, you may be setting yourself up for some heartburn if you get questioned about them in public. To me, there’s no need to hide the fact you’re using them.

Here’s a boilerplate statement Jacque shared in the webinar. I think it’s a fantastic starting point and can be readily adapted to your organization.

Our goal is to be the community’s first and best source of information about our city on a daily basis and in times of urgent, risk, or crisis communication. The city’s communication and marketing team comprises writers, editors, storytellers, photographers, videographers, and designers. Unless otherwise designated, all images and textual content have been created by them for the city.

Some city departments and our communication team will be using generative artificial intelligence to help with idea generation, first drafts of basic information, and other content projects. However, all content published by the city will be written by and/or edited by city employees for accuracy and understanding. Have concerns? Please contact the chair of the city’s AI committee, [insert name/email here].

As you can see at the end of the statement, Jacque recommends pulling together a working group of employees to address issues that will invariably arise as we continue to find new uses for AI.

“In terms of due diligence, you want to have great knowledge sharing and meetings or committees that are talking about these things on an ongoing basis, especially for organizational users, and to follow that up with good education and training for anyone in the organization who is a designated user of artificial intelligence,” Jacque said. “These folks should know who one another are. They need to be part of a community of discussion, and they need good training and support for the work that they’re doing for you.”

The City of San Jose, California, is doing just that. Here are its IT Department’s Generative AI Guidelines. San Jose is also part of the newly formed GovAI Coalition, which includes 120 agencies sharing and collaborating on this new technology. Separately, Interpol has developed an AI Toolkit for law enforcement agencies.

“I think trust is probably one of the biggest things that we want to think about when we’re thinking about AI, because if we lose the trust of our residents and our audiences, then we are in a lot of trouble,” Jacque says. “And trust is everywhere. We talk about authenticity and empathy and things like that. And if those are values of our organizations, then trust is huge.”

To new readers: It’s a GGF Truism that your level of effectiveness in governing is directly proportional to your credibility.

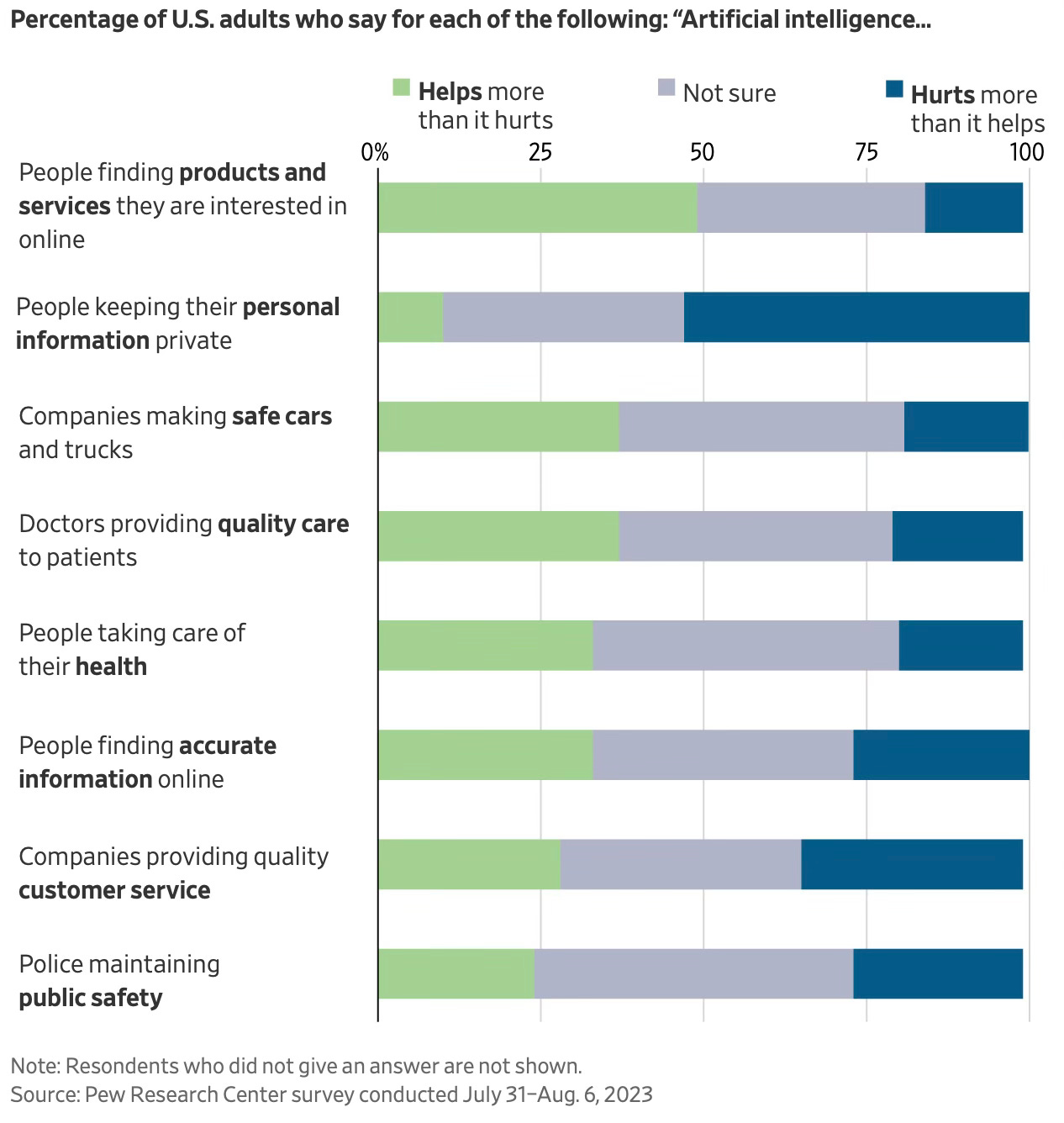

A large percentage of the public doesn’t really know what to think about AI right now, as the chart below suggests. So, the more light you can shine on how your agency is using AI, the better.

When using tools like ChatGPT, you need to be aware there is some bias built into how they work. Here’s why, according to a recent Wall Street Journal article answering some basic AI questions.

These powerful computer systems, called generative AI or large language models, are fed enormous amounts of information — hundreds of billions of words — to “train” them. Imagine if you could read pretty much everything on the internet, and have the ability to remember it and spit it back. That is what these AI systems do, with material coming from millions of websites, digitized books and magazines, scientific papers, newspapers, social-media posts, government reports and other sources.

These systems break the text into words or phrases and assign a number to each. Using sophisticated computer chips that form what are called neural networks — which mimic the neurons in a human brain — they find patterns in these pieces of text through mathematical formulas and learn to guess the next word in a sequence of words. Then, using technology called natural-language processing, they can understand what we ask and reply.
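For readers who like to see the gears turn, here is a toy sketch of the "assign a number to each word, then guess the next word" idea described above. It is only an illustration under loud assumptions: real systems use neural networks trained on billions of words, while this example just counts which word follows which in one made-up sentence. The function names and the sample text are hypothetical, not anything from the WSJ article or a real AI product.

```python
from collections import Counter, defaultdict


def tokenize(text):
    """Split text into lowercase words (real systems use smaller subword pieces)."""
    return text.lower().split()


def build_vocab(tokens):
    """Assign a number to each distinct word, in order of first appearance."""
    vocab = {}
    for tok in tokens:
        if tok not in vocab:
            vocab[tok] = len(vocab)
    return vocab


def train_bigrams(token_ids):
    """Count how often each word number is followed by each other word number."""
    follows = defaultdict(Counter)
    for prev, nxt in zip(token_ids, token_ids[1:]):
        follows[prev][nxt] += 1
    return follows


def guess_next(word, vocab, follows):
    """Return the word most often seen after `word`, or None if there is no data."""
    word_id = vocab.get(word.lower())
    counts = follows.get(word_id)
    if word_id is None or not counts:
        return None
    best_id, _ = counts.most_common(1)[0]
    id_to_word = {idx: tok for tok, idx in vocab.items()}
    return id_to_word[best_id]


# Made-up training text, purely for illustration.
corpus = "the council approved the budget and the council adjourned"
tokens = tokenize(corpus)
vocab = build_vocab(tokens)
follows = train_bigrams([vocab[t] for t in tokens])

print(vocab)
print(guess_next("the", vocab, follows))
```

In this tiny example, "council" follows "the" twice and "budget" follows it once, so the guess after "the" is "council." The takeaway is that the guess reflects whatever patterns were in the training text, which is also how bias in the source material can carry through to the output.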

As Jacque notes, “some of those ideas and text and images are generated by people with bias, with racism, with sexism and other ‘isms’. And so, all of that is in the system that these compositors are pulling from. And we want to make sure that we are not entering that bias into content that we might be asking those systems to generate.”

About those hallucinations

A quick note about “hallucinations,” the term of art for when an AI makes up something but presents it as a fact. From the WSJ article about AI basics:

Hallucinations can arise when an AI system finds patterns in its training material that are irrelevant, mistaken or aren’t meaningful, something that experts call noise. “AI hallucinations are similar to how humans sometimes see figures in the clouds or faces on the moon,” according to IBM.

Hallucinations can trip up chatbot users. A Manhattan federal judge in June fined two lawyers who used ChatGPT to search for prior cases to bolster their personal-injury lawsuit. The cases they cited turned out to be fake.

Even AI promoters can get burned. In February, Google did an online promotion for Bard in which it asked the AI what discoveries the James Webb Space Telescope made. Bard responded that it took “the very first pictures” of a planet outside our solar system. In fact, a European telescope had done that many years earlier. “It’s a good example [of] the need for rigorous testing,” a Google executive said at the time.

Which brings us to accountability. It’s huge, Jacque told webinar attendees. I agree.

“That’s the decision-making piece that I talked about right at the beginning, where I feel like we have to say that our systems, our organizations, if we are using artificial intelligence for any of the work we’re doing, whether that’s generative AI for content, or whether that’s another kind of artificial intelligence, where we’re looking into how to optimize traffic systems or transportation or billing or any number of things that we have been using artificial intelligence for,” she said. “We have to be able to say, at the end of the day, humans are in the system. Humans are the decision makers. And if there are problems in the world, we’re not going to blame it on artificial intelligence. We’re going to still be accountable, and we’ll only have ourselves to blame if things go wrong.”

A final cautionary note: be sure you aren’t adding proprietary or protected information into those systems when you’re using them. Anything you include in a prompt is sent to the provider’s servers in the cloud, where it may be stored and, depending on the tool and its settings, used to train future models. So be sure you’re not sharing something you shouldn’t.
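One way an IT team might back up that caution is to scrub obvious identifiers from a prompt before it leaves the building. The sketch below is a minimal illustration, not a complete safeguard: the three patterns (email, Social Security number, phone) and the `redact` function are hypothetical examples, and a real policy would need to cover far more than a few regular expressions can catch.

```python
import re

# Hypothetical patterns for illustration only; they will miss many formats.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.]\d{3}[-.]\d{4}\b"),
}


def redact(prompt):
    """Replace anything matching a known pattern with a labeled placeholder."""
    for label, pattern in PATTERNS.items():
        prompt = pattern.sub(f"[{label} REDACTED]", prompt)
    return prompt


# Made-up draft prompt containing fake personal details.
draft = (
    "Summarize the complaint from jane.doe@example.com, "
    "SSN 123-45-6789, phone 512-555-0100."
)
print(redact(draft))
```

A filter like this is a backstop, not a substitute for training. A human still needs to read the prompt and decide whether the information belongs in an outside system at all.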

Let’s keep exploring together

So that’s the start of the GGF conversation on AI. We’ll have more to come. I’m interested in the ways your agencies are using AI, so share some of the good stuff in the comments. I’m not the only one who’s curious. Our friends at the non-profit Alliance for Innovation (AFI) have a quick four-question survey on government use of AI.

If you want to learn about more gov uses of AI, SGR is hosting a free webinar at 11 a.m. CST Wednesday, Feb. 7, featuring Micah Gaudet, Deputy City Manager of Maricopa, Arizona. Learn more and register.

GovEphemera: Hits & Misses

Better governance through technology

Give a follow to the folks at GX Foundry, a government experience team providing digital design, engineering, and product ownership for public service teams in Franklin County, Ohio. They launched a Substack newsletter in December to ‘work out loud’ by “posting what we’re learning as we embrace digital agility, product ownership, new tools, user experience (UX) methods, and more. We want other government digital service teams to see what we’re doing just in case it’s useful to them.” If you’re a fan of innovative, customer-service-driven gov IT, you’ll enjoy what they have to share. I especially appreciated the post on what they learned at FormFest 2023 (motto: Better Government, One Form At A Time).

Like GGF, they see the need to restore trust as fundamental to everyone working in government. It’s even in their mission: “We forge digital experiences to build trust in Franklin County.”

Love it.

Dept. of Sigh

You might wonder how 10 percent of the $4.2 trillion in Covid funding was “misspent” and stolen. As a recent AP investigation puts it, there was no great secret code: “The grift was just way too easy.” The article quotes Dan Fruchter, chief of the fraud and white-collar crime unit at the U.S. Attorney’s office in the Eastern District of Washington: “Here was this sort of endless pot of money that anyone could access.”

Sigh.

We’ve got work to do, people

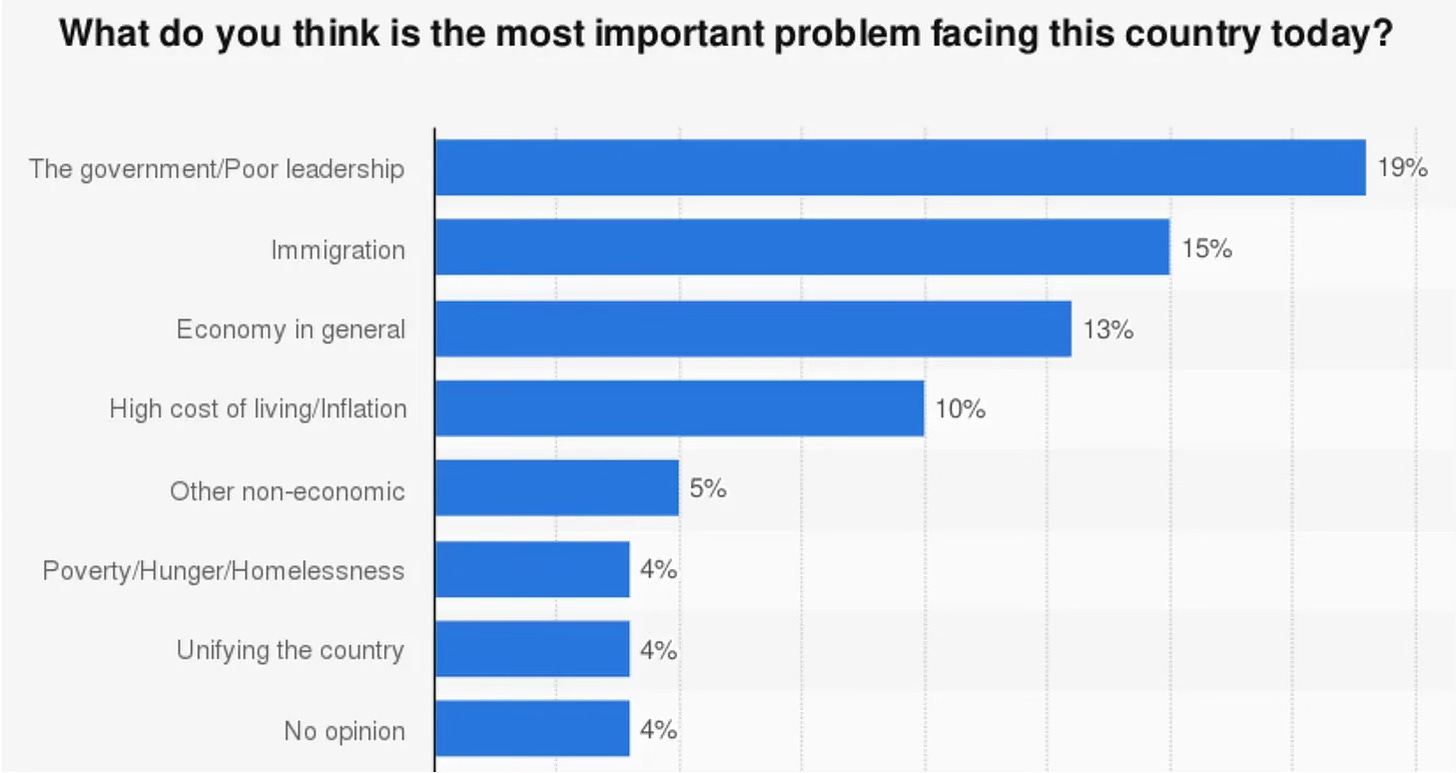

I picked this up from the guys at GX Foundry, who shared this depressing chart from Gallup.

This is why we GGF.

Fun Watch of the Month

The good people Down Under have figured out how to bridge the generation gap(s).

Onward and Upward.

Will,

I have refrained from responding to your essay because I object to AI

and realize that to do so is futile.

Its supporters have already won, without a fight.

AI is irresistible.

It is a fast-moving train that left the station before anyone was awake or ready.

I want to shout: Wait! but it is already way down the track

with a ton of eager professionals on board.

From what I have read so far about AI

I will not be getting on board.

As a writer, my calling is antithetical to this revolution

at least in the domain of writing.

My contribution to the AI conversation is this:

The very first assertion about AI is false.

AI CANNOT and DOES not write.

AI is capable only of manufacturing.

It manufactures text.

Manufacturing text is not writing!

Its product is a phony facsimile.

It's fake.

Our society has been duped.

Once you say "AI writes"

you have crossed the boundary

that separates the real from the artificial.

You have LOST the essence of what writing IS.

What is writing?

Writing is a creation of the human soul.

Writing is a creation of the human heart.

AI cannot create.

It cannot create because it HAS no soul. It HAS no heart.

It can only manufacture,

and pass off its artificial product as real.

My father was a writer.

He was the writer for the Cleveland City Planning Commission

in the 1950s.

He put his heart and soul into writing the urban renewal plans and reports

on Cleveland's efforts to provide better housing for its black citizens.

AI can't do what my father did.

AI is incapable of creating genuine inspiring meaning--

genuine heart and soul truth.

O I am sure it could synthesize and digest all my father's words

and mimic him with manufactured texts,

churning out the reports much faster and at far less expense.

But tell me, should I trust a city government that would DO that?

Just because they include assurances

that humans are in charge of the final product?

Deliver me from this travesty!

I would have to lie to myself in order to accept such subterfuge.